A $450K contract and a bet on the company's future as a SaaS business

Makini is an API platform for enterprise asset management (EAM) — a universal connector for systems like IBM Maximo, SAP, and Oracle that track industrial machinery and equipment. The company had two product lines: the API product (for developers building integrations) and Datafac (a services team that manually generated and migrated data for maritime customers).

Datafac was responsible for 90% of revenue but was entirely manual — operations managers would build equipment hierarchies, match assets to OEM libraries, and export data into client CMMS systems by hand. The process was slow, error-prone, and couldn't scale.

When ABS (the world's largest maritime classification society) asked us to productize this service into software they could use directly, it was both a $450K contract and a bet on the company's future as a SaaS business.

Sole PM owning the entire product lifecycle

I was the sole PM on the Datafac product team, reporting to the Head of Product. My scope covered the entire product lifecycle from discovery through launch — and extended into sales enablement, ops training, and GTM.

Stakeholders I managed across:

Understanding why the manual process broke

Before building anything, I needed to understand what was failing in the existing workflow. I interviewed the founders — who had spent a decade in maritime data — to understand deep industry pain points, spoke with key external customers who collaborated as we built, and ran a structured error analysis across all 4 operations managers, categorizing every logged issue by type and ranking them by frequency.

| Rank | Issue Category | Count |
|---|---|---|
| 1 | Process features / integration issues | 31 |
| 2 | Bugs in code | 13 |
| 3 | Wrong sorting | 9 |
| 4 | Scope change / unclear scope | 7 |
| 5 | Wrong matching with hierarchy | 7 |
| 6 | Wrong hierarchy creation | 5 |
| 7 | Wrong reference documents from client | 5 |

The #1 issue (31 occurrences) was process/integration problems — not bugs. This told me the product solution wasn't just about building UI over the existing workflow. We needed to restructure the workflow itself: clearer scoping upfront, better handoffs between stages, and guardrails against human error at each step.
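The frequency ranking behind that conclusion is simple to reproduce. Below is a minimal sketch, not the tooling actually used: the counts are copied from the table, and `rank_issues` is a hypothetical helper name introduced only for illustration.

```python
from collections import Counter

# Counts copied from the error-analysis table; the raw issue log is not shown here.
issue_counts = Counter({
    "Process features / integration issues": 31,
    "Bugs in code": 13,
    "Wrong sorting": 9,
    "Scope change / unclear scope": 7,
    "Wrong matching with hierarchy": 7,
    "Wrong hierarchy creation": 5,
    "Wrong reference documents from client": 5,
})


def rank_issues(counts):
    """Return (rank, category, count) tuples, most frequent first.

    Categories with equal counts share a rank (competition ranking),
    so ties are visible instead of being hidden by row order.
    """
    ranked = []
    prev_count = None
    rank = 0
    for position, (category, count) in enumerate(counts.most_common(), start=1):
        if count != prev_count:
            rank = position
            prev_count = count
        ranked.append((rank, category, count))
    return ranked


ranked = rank_issues(issue_counts)
total = sum(issue_counts.values())
for rank, category, count in ranked:
    # Share of all logged issues, to show how dominant each category is.
    print(f"#{rank} {category}: {count} ({count / total:.0%})")
```

Ties share a rank in this sketch, which is why the two 7-count categories both land at #4.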

I also combed through sales notes to identify the top customer asks that weren't making it into the formal product feedback process. Common themes: migrating from legacy CMMS systems, improving data quality, saving implementation cost and time, and wanting customization without losing standardization. These directly shaped what we built.

I also surfaced that "scope change / unclear scope" tied with "wrong matching with hierarchy" at 7 occurrences each, sharing the #4 spot. This led directly to a design decision: requiring customers to confirm their equipment list before processing, which prevents costly rework downstream.

From manual service to self-serve platform

The product (codenamed "Ghostface" internally) needed to digitize the entire data implementation workflow. I broke the platform into distinct modules, each solving a specific stage of the process:

Hierarchy Builder

Visual drag-and-drop tool for building equipment hierarchies. Replaced manual spreadsheet work — operators could now structure assets visually instead of editing cells from scratch. Early feedback from Ecotrack helped us realize that starting customers with templates would reduce friction significantly.

OEM Library & Matching

Match equipment to Makini's universal library of OEM data (parts, jobs, manuals). Customers could also bring their own library data — a key insight from demos with BassNet, who prioritized QA above all else and wanted library content elaborated into components, parts, and jobs.

Fast Track & Pricing

Self-serve quoting: the user enters the number of unique items, gets an estimate, and pays 50% upfront. Sister site duplication is priced at ~30% of the lead site price. Built on Stripe with ACH/SWIFT support.

Ops Dashboard & QA

Internal tools for the operations team: request assignment, issue tracking, parsing/sourcing workflow, and quality assurance checklists — replacing Slack-based coordination.

For each module, I wrote product specs, worked with designers on UI, ran sprint planning with engineering, and validated with operations and customers. I also wrote all product copy, tooltips, and user-facing text. A key external customer, Tero Marine, collaborated throughout development — validating our document requirements, confirming terminology, and suggesting additions like inventory lists and class listings we'd initially missed.

Monthly milestones from first commit to GA

I defined the roadmap with clear milestones, negotiated scope with engineering, and managed delivery across monthly sprints. Key assumptions included no major scope changes post-design, load sheet delivery by end of each week, and 4 feedback sessions with ABS to stay aligned.

Hierarchy Builder

Visual hierarchy creation with drag-and-drop, table view, and equipment list import

Matching UI & Library

OEM library search, matching process, model number suggestions for common variations

Export, Billing & Multi-user

Custom load sheet export, Stripe integration with account balance system, role-based access

QA Tracker & GA Release

Internal and external quality assurance workflow, support center, full production launch

Post-launch iteration

Ops feedback-driven improvements, custom mapping, migration workflow, sorting/filtering across all views. Deployed 100–800+ issues over this period.

Seed-stage realities: attrition, ambiguity, and honest feedback

This was a seed-stage company building its first real product under contract pressure. Things didn't always go smoothly.

- Engineering attrition mid-project. The lead engineer left the team during development, and we were short-staffed for stretches. I had to re-scope what was achievable per sprint and renegotiate timelines without losing the ABS deadline.

- Shipped with known risks. By launch, I'd flagged 9 concerns internally — from insufficient customer validation to bugs in role-based access to the ops team not being able to navigate back between stages. I documented all of them and triaged what was launch-blocking vs. post-launch fixable.

- Honest feedback scores post-launch. The ops team rated the platform's user-friendliness at 2.6 out of 5. That was hard to hear, but I turned it into immediate action — the top requests (sorting across all views, removing pagination, adding Ctrl+F search) went into the very next sprint.

- Building for a domain I had zero background in. I'd never worked in maritime, EAM, or industrial data. I compensated by leaning heavily on the founders' decade of domain expertise, running demos with customers like Ulysses Systems (which helped us refine our ICP — smaller teams didn't need the tool, larger operations did), and spending time understanding the data structures myself.

Product decisions that shaped the outcome

Equipment confirmation gate

Required users to confirm their equipment list before processing could begin. This prevented the #4 error category (scope change / unclear scope) from causing expensive rework downstream. I wrote the confirmation copy: "You will not be able to edit equipment list after this step."

Pricing model design

Designed a usage-based pricing system: customers enter the number of unique equipment items, get an estimate, and pay 50% upfront. The final invoice adjusts by up to ±15% based on the actual number of unique items found during analysis. Also introduced an account balance (Stripe credits) so enterprise customers with multiple sites could pre-load funds, eliminating the invoice delays that were stalling project kickoffs.
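The arithmetic of this model can be sketched in a few lines. This is an illustration under stated assumptions, not the production billing logic: the per-item price is hypothetical, and I am assuming the ±15% rule means the final total is recomputed from the actual item count and then capped to within 15% of the original estimate. Amounts are integer cents to avoid floating-point rounding.

```python
from dataclasses import dataclass

DEPOSIT_PCT = 50         # from the text: 50% paid upfront
ADJUSTMENT_CAP_PCT = 15  # from the text: final invoice adjusts within +/-15%
SISTER_SITE_PCT = 30     # from the text: sister site at roughly 30% of lead site


@dataclass(frozen=True)
class Quote:
    estimate_cents: int
    deposit_cents: int


def make_quote(unique_items: int, price_per_item_cents: int) -> Quote:
    """Estimate from the customer-entered item count (price is hypothetical)."""
    if unique_items <= 0 or price_per_item_cents <= 0:
        raise ValueError("item count and price must be positive")
    estimate = unique_items * price_per_item_cents
    return Quote(estimate, estimate * DEPOSIT_PCT // 100)


def final_balance(quote: Quote, actual_items: int, price_per_item_cents: int) -> int:
    """Balance due after analysis, with the total capped to +/-15% of the estimate.

    Assumption: the cap applies to the total, not to the item count.
    """
    raw_total = actual_items * price_per_item_cents
    floor = quote.estimate_cents * (100 - ADJUSTMENT_CAP_PCT) // 100
    ceiling = quote.estimate_cents * (100 + ADJUSTMENT_CAP_PCT) // 100
    capped_total = min(max(raw_total, floor), ceiling)
    return capped_total - quote.deposit_cents


def sister_site_price(lead_site_cents: int) -> int:
    """Price of duplicating a lead site onto a sister site."""
    return lead_site_cents * SISTER_SITE_PCT // 100


# Hypothetical example: 1,000 items at $5.00 each.
quote = make_quote(1000, 500)
balance_small_drift = final_balance(quote, 1100, 500)  # 10% over, inside the cap
balance_large_drift = final_balance(quote, 1300, 500)  # 30% over, hits the cap
```

Capping the adjustment gives the customer a predictable upper bound at quote time, which is the point of showing an estimate before payment.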

Sister site duplication

Maritime companies often have "sister ships" — vessels with 80% identical equipment. I scoped a duplication feature that cloned hierarchy data (minus serial numbers), saving customers from re-entering thousands of nearly identical records. Priced at ~30% of the original site cost.
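Conceptually, the duplication is a deep copy of the hierarchy tree with vessel-specific fields blanked out. The sketch below illustrates that idea only; `EquipmentNode` and its fields are hypothetical names, not the actual data model.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class EquipmentNode:
    """Hypothetical hierarchy node; field names are assumptions for illustration."""
    name: str
    model: Optional[str] = None
    serial_number: Optional[str] = None
    children: List[EquipmentNode] = field(default_factory=list)


def clone_for_sister_site(node: EquipmentNode) -> EquipmentNode:
    """Recursively copy a hierarchy, dropping serial numbers.

    Serial numbers identify a physical unit on one vessel, so they are
    cleared on the copy; structure, names, and models carry over.
    """
    return EquipmentNode(
        name=node.name,
        model=node.model,
        serial_number=None,
        children=[clone_for_sister_site(child) for child in node.children],
    )


# Hypothetical lead-site hierarchy.
lead = EquipmentNode("Main Engine", model="ME-1", serial_number="SN-001", children=[
    EquipmentNode("Fuel Pump", model="FP-2", serial_number="SN-002"),
])
sister = clone_for_sister_site(lead)
```

The copy shares no objects with the original, so edits on the sister site do not leak back to the lead site.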

Ops feedback survey → roadmap

After internal release, I created a structured survey for all 9 operations team members. Average efficiency rating was 3.3/5, user-friendliness 2.6/5. The top requests (sorting, Ctrl+F search, remove pagination) went directly into the next sprint.

Enabling sales with the product

Beyond building the product, I actively enabled the sales team to close deals using it. I built a demo script, created launch page specs, and proactively identified 20–30 CMMS shipping companies as outreach targets.

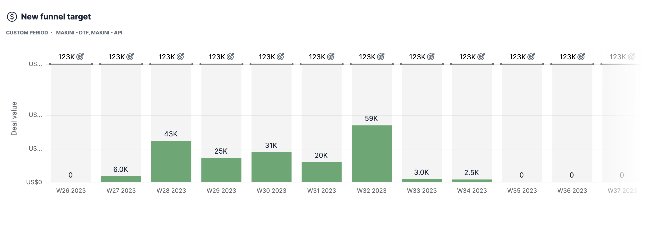

Weekly new funnel target for Datafac — deal value ramped from $0 to $59K/week at peak (W32), tracking the direct impact of having a demo-able product.

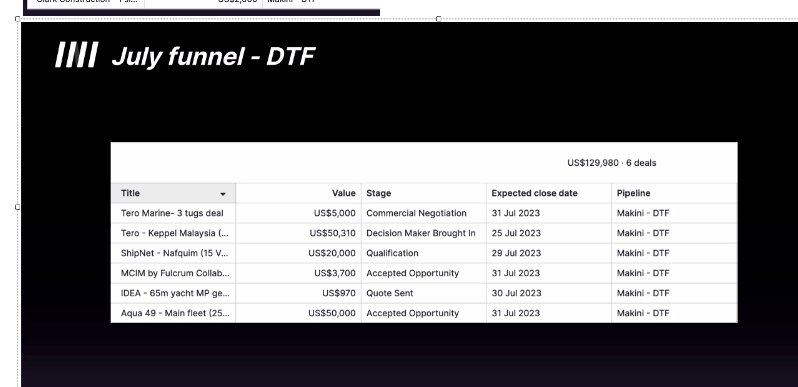

July DTF funnel: 6 deals across various stages — including Tero Marine and Aqua 49, which went on to close as part of $120K+ in follow-on revenue. These deals were directly enabled by having a working product to demo.

From manual service to the company's second product line

The platform became Makini's second revenue-generating product and was later acquired. The product validated a new business line — proving that Datafac's manual services could be productized into scalable SaaS.

What I learned building 0→1 at a seed company

Productizing services requires restructuring the workflow, not just adding UI. The error analysis showed me that the #1 problem wasn't visual tooling — it was process breakdowns. If I'd just built a pretty interface over the existing workflow, we would have digitized the same mistakes. The product needed to enforce better scoping, handoffs, and checkpoints at every stage.

You can build for a domain you don't know — if you know how to learn fast. I came in with zero maritime or EAM knowledge. What made it work was treating every stakeholder conversation as a learning opportunity: founders gave me the decade-long industry perspective, ops managers showed me where the workflow actually broke, and customers like Tero Marine corrected my assumptions about what documents mattered. By month three, I was writing product copy with the right maritime terminology and catching when engineering specs didn't match how customers actually thought about their data.

At seed stage, the PM role is whatever needs to happen. I wrote product specs, tooltips, demo scripts, survey questions, confirmation copy, and sales outreach targets. There was no "that's not my job"; the role was defined by what the product needed at any given moment, not by a job description. That breadth ended up being an advantage: because I touched every surface of the product, I could catch inconsistencies between what engineering was building, what ops needed, and what customers expected.

Build feedback loops before you need them. Early on, I set up a structured process for capturing sales feedback — combing through meeting notes, tracking what percentage of conversations were actually making it into the product feedback form, and holding the team accountable to logging insights. I also created the ops survey before launch, not after. When it came time to prioritize, I had real signal to work with instead of guessing. The 2.6/5 usability score, the ranked error analysis, the top 10 customer asks from sales notes — these weren't reactive. They were benchmarks I'd built ahead of time so I could measure progress and make faster decisions.